Controlling Autonomous Agents


Professor Ji Liu

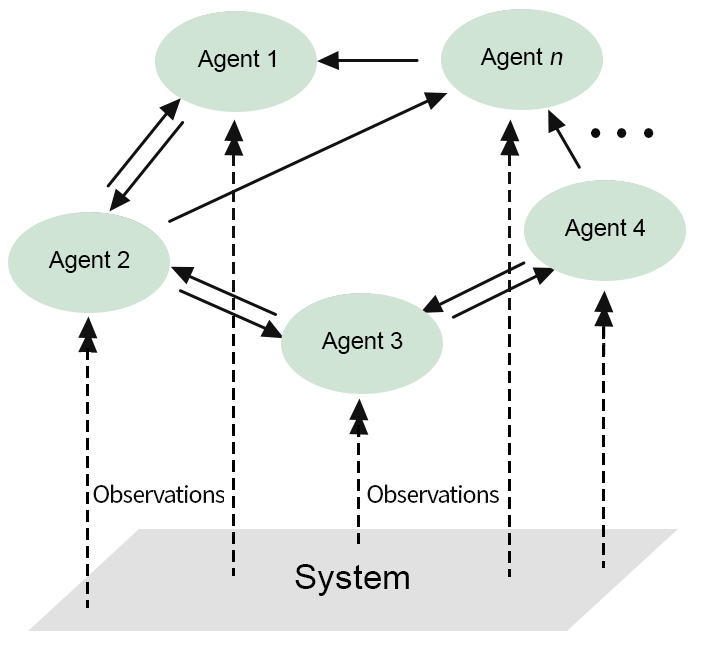

Autonomous agents are computational systems that can act independently and cooperate with other agents. In many real-world networked applications, teams of autonomous agents with limited communication and observation capabilities must process massive amounts of heterogeneous, streaming data while simultaneously seeking (near-)optimal sequences of decisions in line with the team's overall goals. To meet this need, Prof. Ji Liu and his students are working on a research project that aims to develop the theoretical framework, computational models, and scientific software tools needed to design, analyze, and test robust, resilient, communication-efficient, distributed reinforcement learning (RL) algorithms that will enable teams of agents to reliably and efficiently achieve their goals.

Previous distributed RL models have not accounted for the limited sensing and observation capabilities of agents and therefore rely on global information, which is not readily available in distributed environments. To fill this gap, the project aims to build a fully distributed RL system for large-scale networked systems that does not use global information.
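
To make "without using global information" concrete, here is a minimal sketch of one standard pattern for fully distributed policy evaluation; it is not the project's algorithm. Everything in it is a hypothetical assumption: a fixed policy induces a known transition matrix `P` over three states, each of five agents observes only its own reward vector, the team objective is the value of the average reward, and agents on a ring mix their estimates with a doubly stochastic matrix `W` before taking a local TD(0) step. To keep the sketch deterministic, it uses the expected (model-based) TD update rather than sampled transitions.

```python
import numpy as np


def ring_weights(n: int) -> np.ndarray:
    """Doubly stochastic mixing matrix for a ring (assumes n >= 3): each
    agent averages itself and its two neighbours with weight 1/3."""
    W = np.zeros((n, n))
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i, j % n] = 1.0 / 3.0
    return W


def distributed_expected_td(P, local_rewards, W, gamma=0.9, alpha=0.1, steps=5000):
    """Agent i only uses local_rewards[i] and its neighbours' estimates;
    no agent ever sees the team-average reward. Returns one value
    estimate per agent, shape (n_agents, n_states)."""
    n_agents, n_states = local_rewards.shape
    V = np.zeros((n_agents, n_states))
    for _ in range(steps):
        V = W @ V  # consensus: mix neighbours' estimates
        target = local_rewards + gamma * V @ P.T  # row i: r_i + gamma * P V_i
        V = V + alpha * (target - V)  # local expected TD(0) step
    return V


# Hypothetical 3-state chain and 5 agents with private rewards in [0, 1].
P = np.array([[0.5, 0.5, 0.0],
              [0.0, 0.5, 0.5],
              [0.5, 0.0, 0.5]])
rng = np.random.default_rng(0)
R = rng.uniform(0.0, 1.0, size=(5, 3))
V = distributed_expected_td(P, R, ring_weights(5))

# Centralised reference that *does* use global information.
V_star = np.linalg.solve(np.eye(3) - 0.9 * P, R.mean(axis=0))
print(np.abs(V.mean(axis=0) - V_star).max())  # network average: essentially exact
print(np.abs(V - V_star).max())               # per agent: small residual disagreement
```

Because `W` is doubly stochastic, the mixing step preserves the network-wide average, so the average of the agents' estimates follows ordinary TD on the team reward and converges to the centralised value. Individual agents keep a residual disagreement on the order of the step size `alpha`.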

The research led by Prof. Liu is expected to have a significant impact on real-world application areas that need distributed machine learning algorithms and decision-making methods. Typical examples include motion planning for teams of mobile robots and coordination of networked smart devices in an IoT environment.

Key Technical Challenges

The key technical challenges in this project are bridging the gap between the global and local observability settings and achieving resiliency in the presence of dynamic and untrustworthy communications. To meet its technical objective and tackle these challenges, the project investigates three main thrusts. The first thrust establishes a novel fundamental theory for the design of fully distributed RL by approximating global information via distributed estimation. The second thrust develops distributed RL algorithms that are robust to time-varying communication and sensing capabilities, communication delays, and asynchronous updating. The third thrust designs distributed RL algorithms that are resilient to adversaries and malicious attacks capable of introducing untrustworthy information into the communication network. It does so by first designing communication-efficient RL algorithms in which each agent transmits only low-dimensional states, and then designing resilient information fusion/aggregation approaches for small- and even single-dimensional cases.
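
The first two thrusts combine distributed estimation with asynchronous updating. As an illustration only (a standard textbook scheme, not the project's algorithm), randomized pairwise gossip lets agents estimate a global quantity such as a team-wide average while only one pair of neighbours communicates at a time and no global clock exists. The graph, readings, and function name below are hypothetical.

```python
import random


def pairwise_gossip(values, edges, ticks=2000, seed=0):
    """Asynchronous randomized gossip: at each tick a single random edge
    wakes up and its two endpoints replace their estimates with their
    average. Each exchange preserves the network-wide sum, so every
    estimate drifts toward the global average."""
    rng = random.Random(seed)
    x = [float(v) for v in values]
    for _ in range(ticks):
        i, j = rng.choice(edges)  # only one pair communicates per tick
        x[i] = x[j] = (x[i] + x[j]) / 2.0
    return x


# Hypothetical local readings on a 6-agent path graph; true average = 18.
readings = [4, 8, 15, 16, 23, 42]
path_edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]
estimates = pairwise_gossip(readings, path_edges)
print([round(v, 6) for v in estimates])
```

No agent ever collects all six readings, yet each ends up with an accurate estimate of their average. The price of asynchrony is that convergence speed depends on how well connected the graph is.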

Prof. Liu's group is currently focusing on how to reliably perform distributed computations for a large class of consensus-based problems.
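
One well-known way to make a consensus computation resilient in the scalar (single-dimensional) case is the Weighted-Mean-Subsequence-Reduced (W-MSR) rule. Each normal agent discards up to `F` received values above its own and up to `F` below it, then averages what remains. The sketch below uses equal weights and assumes a complete graph of seven agents, one of which is a hypothetical malicious agent that keeps broadcasting 100. It illustrates resilient aggregation in general and is not presented as the method used by Prof. Liu's group.

```python
def wmsr_step(values, neighbors, F, malicious=frozenset()):
    """One synchronous W-MSR update with equal weights. values[k] is what
    agent k broadcasts; malicious agents ignore the rule and keep theirs."""
    new = list(values)
    for i, x_i in enumerate(values):
        if i in malicious:
            continue
        received = [values[j] for j in neighbors[i]]
        larger = sorted(v for v in received if v > x_i)
        smaller = sorted(v for v in received if v < x_i)
        equal = [v for v in received if v == x_i]
        # Drop up to F largest values above x_i and up to F smallest below it.
        kept = larger[:max(len(larger) - F, 0)] + smaller[F:] + equal
        new[i] = (x_i + sum(kept)) / (1 + len(kept))
    return new


n = 7
neighbors = {i: [j for j in range(n) if j != i] for i in range(n)}  # complete graph
initial = [1.0, 2.0, 4.0, 5.0, 7.0, 9.0, 100.0]  # agent 6 is the adversary

resilient, naive = list(initial), list(initial)
for _ in range(50):
    resilient = wmsr_step(resilient, neighbors, F=1, malicious={6})
    naive = wmsr_step(naive, neighbors, F=0, malicious={6})  # F=0: plain averaging

print(resilient[:6])  # normal agents agree on a value inside [1, 9]
print(naive[:6])      # plain averaging is dragged toward the adversary's 100
```

With `F=0` the same function reduces to ordinary averaging, which lets a single adversary pull every normal agent outside the range of honest values. With `F=1` the normal agents still agree, and their common value stays within the range of their own initial values.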

When asked what has surprised him most about this problem, Prof. Liu points to the big gap between theory and practice: some RL algorithms work in theory but do not perform well in experiments.

Students

This project promotes education and outreach activities, including broadening participation of female students in the field of machine learning, creating new courses, and designing research projects for K-12 students and undergraduates.

Three PhD students have been involved in the project, focusing respectively on RL algorithm design and analysis, resilient distributed algorithms, and asynchronous distributed algorithms. One of them, Wesley Suttle, graduated in May 2022 and is currently a Distinguished Postdoctoral Fellow at the Army Research Laboratory.

The project is well suited to students because it combines theoretical analysis with programming skills for RL, a hot topic in both academia and industry. The project is funded by the National Science Foundation (NSF) Directorate for Computer and Information Science and Engineering (CISE).

Conclusion

Prof. Liu is well positioned to lead this project given his background and strengths in distributed control and optimization. His previous research focused on the design and analysis of distributed control and computation algorithms for problems such as consensus, distributed solutions to linear equations, distributed optimization, and distributed estimation for linear systems. The current project can be viewed as a natural continuation of these efforts.
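
Among the problems listed above, distributed solutions to linear equations have a particularly compact algorithmic form. Here is a minimal sketch of a projection-based consensus scheme for Ax = b in which agent i knows only row i of A and entry i of b. Each agent starts on its own hyperplane and repeatedly moves toward its neighbours' average, projected so that it never leaves that hyperplane. The matrix, neighbour lists, and iteration count are assumptions chosen for the example, and the code is not presented as the exact algorithm from Prof. Liu's publications.

```python
import numpy as np


def distributed_linear_solve(A, b, neighbors, iters=2000):
    """Agent i knows only A[i] and b[i]; neighbors[i] lists the agents
    (including i itself) whose current estimates agent i can read."""
    n, d = A.shape
    sq = [A[i] @ A[i] for i in range(n)]
    # Start each agent at the minimum-norm point of its hyperplane a_i^T x = b_i.
    X = np.array([A[i] * b[i] / sq[i] for i in range(n)])
    # P[i] projects onto the null space of a_i^T, so updates keep a_i^T x_i = b_i.
    P = [np.eye(d) - np.outer(A[i], A[i]) / sq[i] for i in range(n)]
    for _ in range(iters):
        avg = [X[neighbors[i]].mean(axis=0) for i in range(n)]
        X = np.array([X[i] - P[i] @ (X[i] - avg[i]) for i in range(n)])
    return X


A = np.array([[4.0, 1.0, 0.0],
              [1.0, 5.0, 1.0],
              [0.0, 1.0, 3.0]])
x_true = np.array([1.0, 2.0, 3.0])
b = A @ x_true  # hypothetical data: b = [6, 14, 11]
neighbors = {i: [0, 1, 2] for i in range(3)}  # every agent hears every agent
X = distributed_linear_solve(A, b, neighbors)
print(X)  # each row approaches x_true
```

Because each projection matrix `P[i]` annihilates row i of A, every agent satisfies its own equation at every step. Consensus then drives all agents toward the common point that satisfies every equation, which under standard conditions (for example, A invertible and a connected communication graph) is the unique solution.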